Computer chips are a hot commodity. Nvidia is now one of the most valuable companies in the world, and the Taiwanese manufacturer of Nvidia’s chips, TSMC, has been called a geopolitical force. It should come as no surprise, then, that a growing number of hardware startups and established companies are looking to take a jewel or two from the crown.

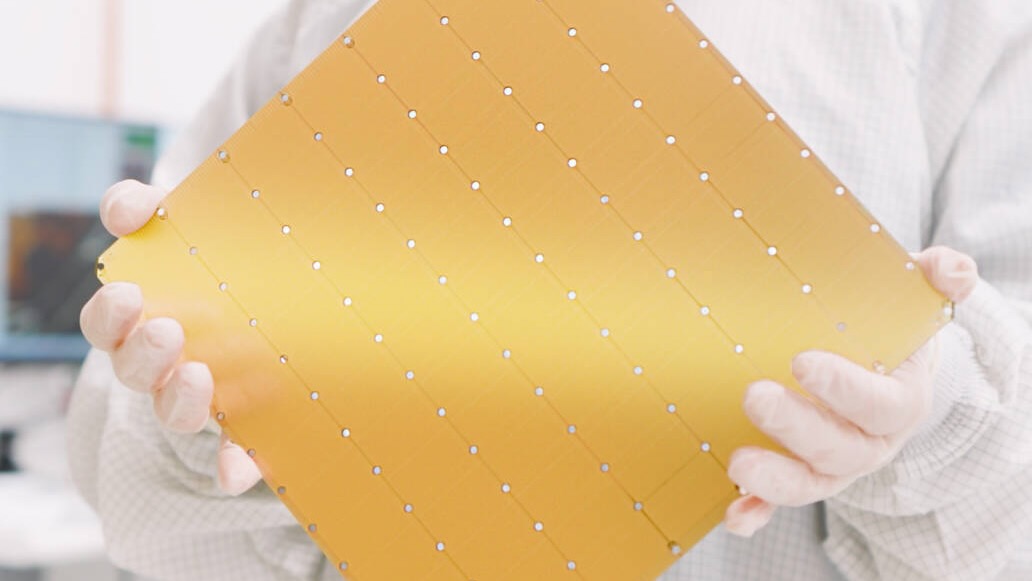

Of these, Cerebras is one of the weirdest. The company makes computer chips the size of tortillas bristling with just under a million processors, each linked to its own local memory. The processors are small but lightning quick because they don’t shuttle information to and from shared memory located far away. And the connections between processors, which in most supercomputers require linking separate chips across room-sized machines, are quick too.

This means the chips are stellar for specific tasks. Recent preprint studies in two of these, one simulating molecules and the other training and running large language models, show the wafer-scale advantage can be formidable. The chips outperformed Frontier, the world’s top supercomputer, in the former. They also showed a stripped-down AI model could use a third of the usual energy without sacrificing performance.

Molecular Matrix

The materials we make things with are crucial drivers of technology. They usher in new possibilities by breaking old limits in strength or heat resistance. Take fusion power. If researchers can make it work, the technology promises to be a new, clean source of energy. But liberating that energy requires materials that can withstand extreme conditions.

Scientists use supercomputers to model how the metals lining fusion reactors might deal with the heat. These simulations zoom in on individual atoms and use the laws of physics to guide their motions and interactions at grand scales. Today’s supercomputers can model materials containing billions or even trillions of atoms with high precision.

But while the scale and quality of these simulations have progressed a lot over the years, their speed has stalled. Due to the way supercomputers are designed, they can only model so many interactions per second, and making the machines bigger only compounds the problem. This means the total length of molecular simulations has a hard practical limit.

Cerebras partnered with Sandia, Lawrence Livermore, and Los Alamos National Laboratories to see if a wafer-scale chip could speed things up.

The team assigned a single simulated atom to each processor. So that they could quickly exchange information about position, motion, and energy, the processors modeling atoms that would be physically close in the real world were neighbors on the chip too. Depending on their properties at any given time, atoms could hop between processors as they moved about.
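The mapping idea can be illustrated with a toy example. This is only a sketch under stated assumptions, not the study's actual method: the 2D grid, the `spacing` parameter, and the function names `assign_atoms` and `neighbor_atoms` are all hypothetical. It places each atom in the grid cell matching its position, so atoms that are close in space end up on adjacent processors.

```python
# Illustrative only: a toy 2D mapping of atoms to a grid of processors.
# Function names and the one-atom-per-cell rule are assumptions for this sketch.

def assign_atoms(positions, spacing):
    """Map each atom's (x, y) position to a grid cell (row, col), one atom per cell."""
    grid = {}
    for atom_id, (x, y) in enumerate(positions):
        cell = (int(y // spacing), int(x // spacing))
        if cell in grid:
            raise ValueError(f"cell {cell} already holds atom {grid[cell]}")
        grid[cell] = atom_id
    return grid

def neighbor_atoms(grid, cell, hops=1):
    """Return atoms held by processors within `hops` grid steps of `cell`."""
    r0, c0 = cell
    found = []
    for dr in range(-hops, hops + 1):
        for dc in range(-hops, hops + 1):
            other = (r0 + dr, c0 + dc)
            if (dr, dc) != (0, 0) and other in grid:
                found.append(grid[other])
    return sorted(found)

# Five atoms on a slightly perturbed lattice (made-up coordinates).
positions = [(0.1, 0.2), (1.3, 0.1), (0.2, 1.4), (1.2, 1.1), (2.4, 0.3)]
grid = assign_atoms(positions, spacing=1.0)

# Atom 0 only needs to talk to the processors right next to it.
print(neighbor_atoms(grid, (0, 0)))
```

When an atom drifts far enough that its position falls in a different cell, rerunning the assignment moves it to another processor, which is the "hopping" described above.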

The team modeled 800,000 atoms in three materials (copper, tungsten, and tantalum) that might be useful in fusion reactors. The results were pretty stunning, with simulations of tantalum yielding a 179-fold speedup over the Frontier supercomputer. That means the chip could crunch a year’s worth of work on a supercomputer into a few days and significantly extend the length of simulation from microseconds to milliseconds. It was also vastly more efficient at the task.
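As a quick back-of-the-envelope check on the "year into a few days" claim, the snippet below divides one year of supercomputer time by the reported 179-fold speedup. It assumes the speedup applies uniformly to the whole workload, which is a simplification.

```python
# Back-of-the-envelope check, assuming the 179x speedup applies uniformly.
SPEEDUP = 179                 # tantalum speedup over Frontier reported above
days_on_supercomputer = 365   # one year of simulation work

days_on_wafer = days_on_supercomputer / SPEEDUP
print(f"{days_on_wafer:.1f} days")
```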

“I’ve been working in atomistic simulation of materials for more than 20 years. During that time, I’ve participated in huge improvements in both the size and accuracy of the simulations. However, despite all this, we have been unable to increase the actual simulation rate. The wall-clock time required to run simulations has barely budged in the last 15 years,” Aidan Thompson of Sandia National Laboratories said in a statement. “With the Cerebras Wafer-Scale Engine, we can swiftly drive at hypersonic speeds.”

Although the chip increases modeling speed, it can’t compete on scale. The number of simulated atoms is limited to the number of processors on the chip. Next steps include assigning multiple atoms to each processor and using new wafer-scale supercomputers that link 64 Cerebras systems together. The team estimates these machines could model as many as 40 million tantalum atoms at speeds similar to those in the study.
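To put the 40-million-atom estimate in context, the sketch below works out the per-system share. It assumes the atoms are split evenly across the 64 linked systems; the article does not say how the work would actually be divided.

```python
# Rough arithmetic only; the even split across systems is an assumption.
SYSTEMS = 64
TARGET_ATOMS = 40_000_000   # team's estimate for the linked machines
ATOMS_IN_STUDY = 800_000    # atoms modeled on a single chip in the study

atoms_per_system = TARGET_ATOMS // SYSTEMS
share_of_study_size = atoms_per_system / ATOMS_IN_STUDY

print(atoms_per_system)              # atoms each system would handle
print(f"{share_of_study_size:.2f}")  # relative to the single-chip run
```

Under that assumption, each system would carry somewhat fewer atoms than the single chip did in the study.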

AI Light

While simulating the physical world could be a core competency for wafer-scale chips, they’ve always been focused on artificial intelligence. The latest AI models have grown exponentially, meaning the energy and cost of training and running them have exploded. Wafer-scale chips may be able to make AI more efficient.

In a separate study, researchers from Neural Magic and Cerebras worked to shrink the size of Meta’s 7-billion-parameter Llama language model. To do this, they made what’s called a “sparse” AI model where many of the algorithm’s parameters are set to zero. In theory, this means they can be skipped, making the algorithm smaller, faster, and more efficient. But today’s leading AI chips, called graphics processing units (or GPUs), read algorithms in chunks, meaning they can’t skip every zeroed-out parameter.

Because memory is distributed across a wafer-scale chip, it can read every parameter and skip zeroes wherever they occur. Even so, extremely sparse models don’t usually perform as well as dense models. But here, the team found a way to recover lost performance with a little extra training. Their model maintained performance, even with 70 percent of the parameters zeroed out. Running on a Cerebras chip, it sipped a meager 30 percent of the energy and ran in a third of the time of the full-sized model.
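The difference between skipping zeros one at a time and skipping them in chunks can be shown with a toy dot product. This is a simplified sketch, not a model of how any real GPU or Cerebras chip works: the chunk size of 4, the weight pattern, and the function names are all assumptions made for illustration. It counts the multiply-accumulate operations (MACs) each strategy performs.

```python
# Toy comparison, not a model of any real chip: count multiply-accumulates
# (MACs) for a dot product when zeros are skipped per element vs. per chunk.

def dense_dot(weights, x):
    """Multiply every weight, zero or not."""
    total, macs = 0.0, 0
    for w, xi in zip(weights, x):
        total += w * xi
        macs += 1
    return total, macs

def skip_zeros_dot(weights, x):
    """Skip every zero weight individually."""
    total, macs = 0.0, 0
    for w, xi in zip(weights, x):
        if w != 0.0:
            total += w * xi
            macs += 1
    return total, macs

def chunked_dot(weights, x, chunk=4):
    """Skip a chunk only if all of its weights are zero (hypothetical chunk size)."""
    total, macs = 0.0, 0
    for start in range(0, len(weights), chunk):
        block = weights[start:start + chunk]
        if any(w != 0.0 for w in block):
            for w, xi in zip(block, x[start:start + chunk]):
                total += w * xi
                macs += 1
    return total, macs

# 100 made-up weights with 70 percent set to zero, echoing the sparsity level above.
weights = [0.0 if i % 10 < 7 else 1.0 for i in range(100)]
x = [float(i) for i in range(100)]

for name, fn in [("dense", dense_dot), ("chunked", chunked_dot), ("per-element", skip_zeros_dot)]:
    result, macs = fn(weights, x)
    print(f"{name:12s} result={result} macs={macs}")
```

All three strategies produce the same result. Only the per-element version does work proportional to the number of nonzero weights, while the chunked version still processes zeros that share a chunk with a nonzero value.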

Wafer-Scale Wins?

While all this is impressive, Cerebras is still niche. Nvidia’s more conventional chips remain firmly in control of the market. At least for now, that appears unlikely to change. Companies have invested heavily in expertise and infrastructure built around Nvidia.

But wafer-scale may continue to prove itself in niche, but still crucial, applications in research. And it may be that the approach becomes more common overall. The ability to make wafer-scale chips is only now being perfected. In a hint at what’s to come for the field as a whole, the biggest chipmaker in the world, TSMC, recently said it’s building out its wafer-scale capabilities. This could make the chips more common and capable.

For their part, the team behind the molecular modeling work say wafer-scale’s influence could be more dramatic. Like GPUs before them, adding wafer-scale chips to the supercomputing mix could yield some formidable machines in the future.

“Future work will focus on extending the strong-scaling efficiency demonstrated here to facility-level deployments, potentially leading to an even greater paradigm shift in the Top500 supercomputer list than that introduced by the GPU revolution,” the team wrote in their paper.

Image Credit: Cerebras