The DataFrame equality test functions were introduced in Apache Spark™ 3.5 and Databricks Runtime 14.2 to simplify PySpark unit testing. The full set of features described in this blog post will be available starting with the upcoming Apache Spark 4.0 and Databricks Runtime 14.3.

Write more confident DataFrame transformations with DataFrame equality test functions

Working with data in PySpark involves applying transformations, aggregations, and manipulations to DataFrames. As transformations accumulate, how can you be confident that your code works as expected? The PySpark equality test utility functions provide an efficient and effective way to check your data against expected outcomes, helping you identify unexpected differences and catch errors early in the analysis process. What’s more, they return intuitive information pinpointing exactly where the differences are, so you can take action immediately without spending a lot of time debugging.

Using DataFrame equality test functions

Two equality test functions for PySpark DataFrames were introduced in Apache Spark 3.5: assertDataFrameEqual and assertSchemaEqual. Let’s take a look at how to use each of them.

assertDataFrameEqual: This function lets you compare two PySpark DataFrames for equality with a single line of code, checking whether the data and schemas match. It returns descriptive information when there are differences.

Let’s walk through an example. First, we’ll create two DataFrames, intentionally introducing a difference in the first row:

df_expected = spark.createDataFrame(data=[("Alfred", 1500), ("Alfred", 2500), ("Anna", 500), ("Anna", 3000)], schema=["name", "amount"])

df_actual = spark.createDataFrame(data=[("Alfred", 1200), ("Alfred", 2500), ("Anna", 500), ("Anna", 3000)], schema=["name", "amount"])

Then we’ll call assertDataFrameEqual with the two DataFrames:

from pyspark.testing import assertDataFrameEqual

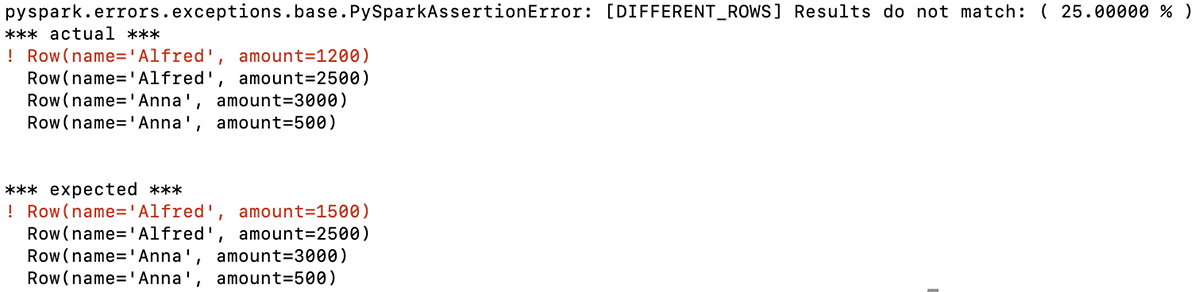

assertDataFrameEqual(df_actual, df_expected)The operate returns a descriptive message indicating that the primary row within the two DataFrames is totally different. On this instance, the primary quantities listed for Alfred on this row should not the identical (anticipated: 1500, precise: 1200):

With this information, you immediately know what is wrong with the DataFrame your code generated and can target your debugging accordingly.

The function also has several options to control the strictness of the DataFrame comparison, so you can adjust it to your specific use case.

assertSchemaEqual: This function compares only the schemas of two DataFrames; it doesn’t compare row data. It lets you validate whether the column names, data types, and nullable property are the same for two different DataFrames.

Let’s look at an example. First, we’ll create two DataFrames with different schemas:

schema_actual = "name STRING, amount DOUBLE"

data_expected = [["Alfred", 1500], ["Alfred", 2500], ["Anna", 500], ["Anna", 3000]]

data_actual = [["Alfred", 1500.0], ["Alfred", 2500.0], ["Anna", 500.0], ["Anna", 3000.0]]

df_expected = spark.createDataFrame(data=data_expected)

df_actual = spark.createDataFrame(data=data_actual, schema=schema_actual)

Now, let’s call assertSchemaEqual with these two DataFrame schemas:

from pyspark.testing import assertSchemaEqual

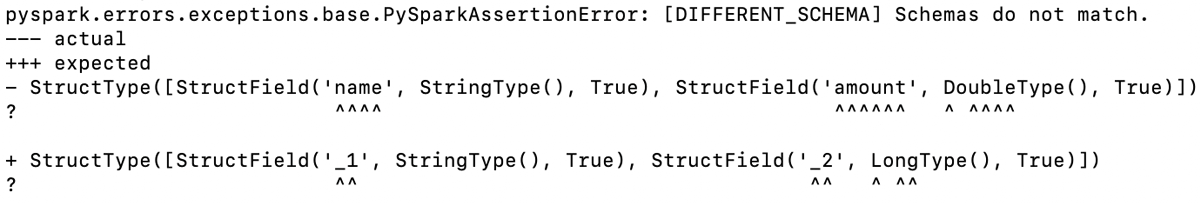

assertSchemaEqual(df_actual.schema, df_expected.schema)The operate determines that the schemas of the 2 DataFrames are totally different, and the output signifies the place they diverge:

In this example, there are two differences: the data type of the amount column is DOUBLE in the actual DataFrame but LONG in the expected DataFrame, and because we created the expected DataFrame without specifying a schema, the column names are also different.

Both of these differences are highlighted in the function output, as illustrated here.

assertPandasOnSparkEqual is not covered in this blog post because it is deprecated as of Apache Spark 3.5.1 and scheduled to be removed in the upcoming Apache Spark 4.0.0. For testing Pandas API on Spark, see Pandas API on Spark equality test functions.

Structured output for debugging differences in PySpark DataFrames

While the assertDataFrameEqual and assertSchemaEqual functions are primarily aimed at unit testing, where you typically use smaller datasets to test your PySpark functions, you might also use them with DataFrames that have more than just a few rows and columns. In such scenarios, you can easily retrieve the row data for the rows that differ to make further debugging easier.

Let’s take a look at how to do that. We’ll use the same data we used earlier to create two DataFrames:

df_expected = spark.createDataFrame(data=[("Alfred", 1500), ("Alfred", 2500), ("Anna", 500), ("Anna", 3000)], schema=["name", "amount"])

df_actual = spark.createDataFrame(data=[("Alfred", 1200), ("Alfred", 2500), ("Anna", 500), ("Anna", 3000)], schema=["name", "amount"])

And now we’ll grab the data that differs between the two DataFrames from the assertion error object after calling assertDataFrameEqual:

from pyspark.testing import assertDataFrameEqual

from pyspark.errors import PySparkAssertionError

strive:

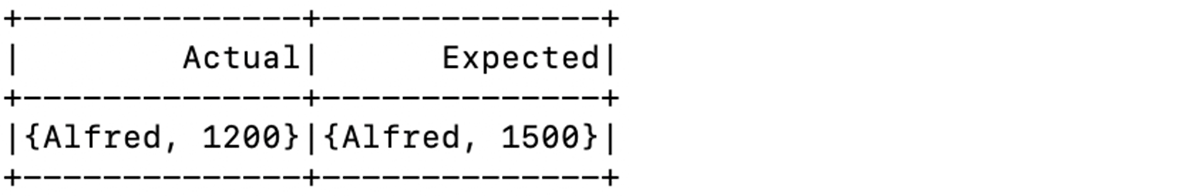

assertDataFrameEqual(df_actual, df_expected, includeDiffRows=True)

besides PySparkAssertionError as e:

# `e.knowledge` right here appears like:

# [(Row(name='Alfred', amount=1200), Row(name='Alfred', amount=1500))]

spark.createDataFrame(e.knowledge, schema=["Actual", "Expected"]).present() Making a DataFrame based mostly on the rows which might be totally different and displaying it, as we have executed on this instance, illustrates how straightforward it’s to entry this data:

As you can see, information on the rows that are different is immediately available for further analysis. You no longer have to write code to extract this information from the actual and expected DataFrames for debugging purposes.

This feature will be available from the upcoming Apache Spark 4.0 and DBR 14.3.

Pandas API on Spark equality test functions

In addition to the functions for testing the equality of PySpark DataFrames, Pandas API on Spark users will have access to the following DataFrame equality test functions:

assert_frame_equal

assert_series_equal

assert_index_equal

The functions provide options for controlling the strictness of comparisons and are great for unit testing your Pandas API on Spark DataFrames. They provide the exact same API as the pandas test utility functions, so you can use them without changing existing pandas test code that you want to run using Pandas API on Spark.

Here are a couple of examples demonstrating the use of assert_frame_equal with different parameters, comparing Pandas API on Spark DataFrames:

from pyspark.pandas.testing import assert_frame_equal

import pyspark.pandas as ps

# Create two barely totally different Pandas API on Spark DataFrames

df1 = ps.DataFrame({"a": [1, 2, 3], "b": [4.0, 5.0, 6.0]})

df2 = ps.DataFrame({"a": [1, 2, 3], "b": [4, 5, 6]}) # 'b' column as integers

# Validate DataFrame equality with strict knowledge sort checking

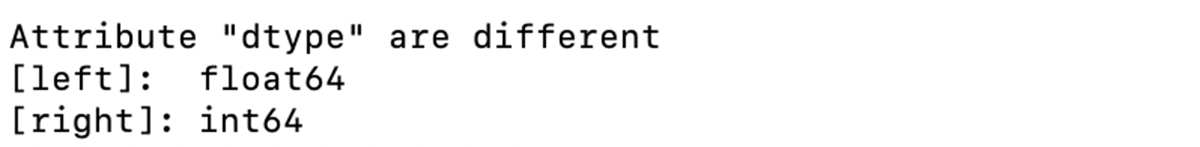

assert_frame_equal(df1, df2, check_dtype=True)On this instance, the schemas of the 2 DataFrames are totally different. The operate output lists the variations, as proven right here:

Using the check_dtype argument, we can tell the function to compare column data even when the columns do not have the same data type, as in this example:

# DataFrames are equal with check_dtype=False

assert_frame_equal(df1, df2, check_dtype=False)

Since we specified that assert_frame_equal should ignore column data types, it now considers the two DataFrames equal.

These functions also allow comparisons between Pandas API on Spark objects and pandas objects, facilitating compatibility checks between different DataFrame libraries, as illustrated in this example:

import pandas as pd

from pyspark.pandas.testing import assert_index_equal, assert_series_equal

# pandas DataFrame

df_pandas = pd.DataFrame({"a": [1, 2, 3], "b": [4.0, 5.0, 6.0]})

# Comparing a Pandas API on Spark DataFrame with the pandas DataFrame

assert_frame_equal(df1, df_pandas)

# Comparing a Pandas API on Spark Series with the pandas Series

assert_series_equal(df1.a, df_pandas.a)

# Comparing a Pandas API on Spark Index with the pandas Index

assert_index_equal(df1.index, df_pandas.index)

Using the new PySpark DataFrame and Pandas API on Spark equality test functions is a great way to make sure your PySpark code works as expected. These functions help you not only catch errors but also understand exactly what has gone wrong, so you can quickly and easily identify where the problem is. Check out the Testing PySpark page for more information.

These functions will be available from the upcoming Apache Spark 4.0. DBR 14.2 already supports them.