In the world of SEO, URL parameters pose a significant problem.

While developers and data analysts may appreciate their utility, these query strings are an SEO headache.

Countless parameter combinations can split a single user intent across thousands of URL variations. This can cause complications for crawling, indexing, and visibility and, ultimately, lead to lower traffic.

The issue is we can't simply wish them away, which means it's crucial to master how to manage URL parameters in an SEO-friendly way.

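To do so, we'll explore what URL parameters are, the SEO issues they cause, how to assess the extent of your parameter problem, the SEO solutions for taming them, and best practices for URL parameter handling.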

What Are URL Parameters?

Image created by author

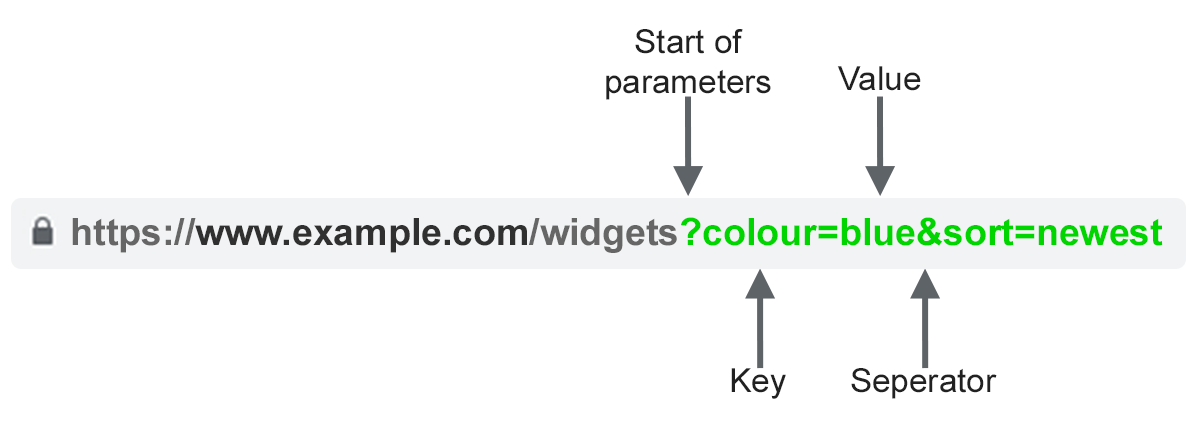

URL parameters, also known as query strings or URI variables, are the portion of a URL that follows the '?' symbol. They consist of a key and a value pair, separated by an '=' sign. Multiple parameters can be added to a single page when separated by an '&'.
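To make the key/value structure concrete, here is a minimal sketch using Python's standard `urllib.parse` module (Python 3.9+ assumed). The helper name `query_pairs` is hypothetical and only for illustration.

```python
from urllib.parse import parse_qsl, urlsplit


def query_pairs(url: str) -> list:
    """Return the (key, value) pairs in a URL's query string, in order."""
    # urlsplit isolates everything after '?' and before any '#'.
    query = urlsplit(url).query
    # parse_qsl splits on '&', then on '=', and percent-decodes each part;
    # keep_blank_values=True keeps keys such as 'key2=' instead of dropping them.
    return parse_qsl(query, keep_blank_values=True)


print(query_pairs("https://www.example.com/widgets?sort=lowest-price&color=purple"))
# [('sort', 'lowest-price'), ('color', 'purple')]
```

Each '&' starts a new pair, and each '=' separates a key from its value.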

The most common use cases for parameters are:

- Tracking – For example ?utm_medium=social, ?sessionid=123 or ?affiliateid=abc

- Reordering – For example ?sort=lowest-price, ?order=highest-rated or ?so=newest

- Filtering – For example ?type=widget, ?color=purple or ?price-range=20-50

- Identifying – For example ?product=small-purple-widget, ?categoryid=124 or ?itemid=24AU

- Paginating – For example ?page=2, ?p=2 or ?viewItems=10-30

- Searching – For example ?query=users-query, ?q=users-query or ?search=drop-down-option

- Translating – For example ?lang=fr or ?language=de

SEO Issues With URL Parameters

1. Parameters Create Duplicate Content

Often, URL parameters make no significant change to the content of a page.

A re-ordered version of the page is often not so different from the original. A page URL with tracking tags or a session ID is identical to the original.

For example, the following URLs would all return a collection of widgets.

- Static URL: https://www.example.com/widgets

- Tracking parameter: https://www.example.com/widgets?sessionID=32764

- Reordering parameter: https://www.example.com/widgets?sort=newest

- Identifying parameter: https://www.example.com?category=widgets

- Searching parameter: https://www.example.com/products?search=widget

That's quite a few URLs for what is effectively the same content. Now imagine this over every category on your site; it can really add up.

The challenge is that search engines treat every parameter-based URL as a new page. So, they see multiple variations of the same page, all serving duplicate content and all targeting the same search intent or semantic topic.
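One way to see how many variants collapse into one piece of content is to strip the keys you believe don't change the page and group what's left. This is a rough sketch, not how any search engine actually clusters URLs. The `NON_CONTENT_KEYS` set is a hypothetical assumption you would replace with the keys from your own site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical: keys assumed not to change page content on this site.
NON_CONTENT_KEYS = {"sessionid", "utm_medium", "utm_source", "sort"}


def content_key(url: str) -> str:
    """Reduce a URL to the parts that (by assumption) change its content."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in NON_CONTENT_KEYS
    ]
    # urlunsplit omits the '?' when the rebuilt query is empty; the fragment is dropped.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_duplicates(urls):
    """Group URLs that collapse to the same content key."""
    groups = defaultdict(list)
    for url in urls:
        groups[content_key(url)].append(url)
    return dict(groups)


urls = [
    "https://www.example.com/widgets",
    "https://www.example.com/widgets?sessionID=32764",
    "https://www.example.com/widgets?sort=newest",
]
for canonical, variants in group_duplicates(urls).items():
    print(canonical, len(variants))
# https://www.example.com/widgets 3
```

Three crawlable URLs, one page of content.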

While such duplication is unlikely to cause a website to be completely filtered out of the search results, it does lead to keyword cannibalization and could downgrade Google's view of your overall site quality, as these additional URLs add no real value.

2. Parameters Reduce Crawl Efficacy

Crawling redundant parameter pages distracts Googlebot, reducing your site's ability to index SEO-relevant pages and increasing server load.

Google sums up this point perfectly.

"Overly complex URLs, especially those containing multiple parameters, can cause problems for crawlers by creating unnecessarily high numbers of URLs that point to identical or similar content on your site.

As a result, Googlebot may consume much more bandwidth than necessary, or may be unable to completely index all the content on your site."

3. Parameters Split Page Ranking Signals

If you have multiple permutations of the same page content, links and social shares may be coming in on various versions.

This dilutes your ranking signals. When you confuse a crawler, it becomes unsure which of the competing pages to index for the search query.

4. Parameters Make URLs Less Clickable

Image created by author

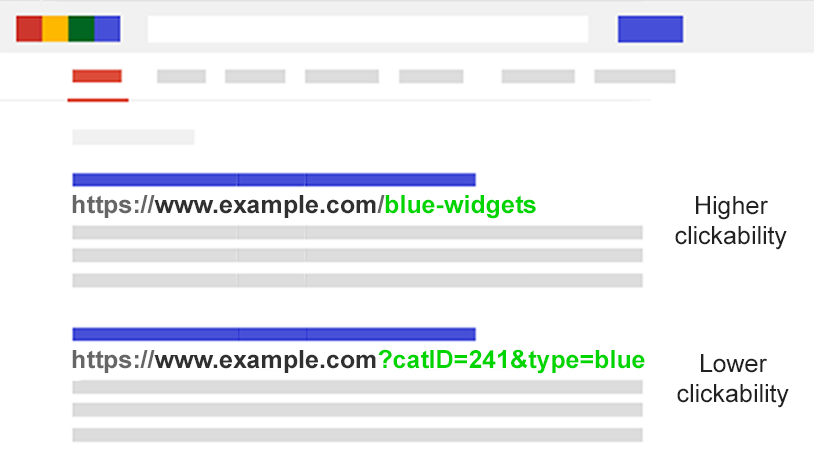

Let's face it: parameter URLs are unsightly. They're hard to read. They don't seem as trustworthy. As such, they are slightly less likely to be clicked.

This may impact page performance. Not only because CTR influences rankings, but also because such URLs are less clickable in AI chatbots, on social media, in emails, when copy-pasted into forums, or anywhere else the full URL may be displayed.

While this may only have a fractional impact on a single page's amplification, every tweet, like, share, email, link, and mention matters for the domain.

Poor URL readability could contribute to a decrease in brand engagement.

Assess The Extent Of Your Parameter Problem

It's important to know every parameter used on your website. But chances are your developers don't keep an up-to-date list.

So how do you find all the parameters that need handling? Or understand how search engines crawl and index such pages? Or know the value they bring to users?

Follow these five steps:

- Run a crawler: With a tool like Screaming Frog, you can search for "?" in the URL.

- Review your log files: See if Googlebot is crawling parameter-based URLs.

- Look in the Google Search Console page indexing report: In the samples of indexed pages and relevant non-indexed exclusions, search for '?' in the URL.

- Search with site: inurl: advanced operators: Learn how Google is indexing the parameters you found by putting the key in a site:example.com inurl:key combination query.

- Look in the Google Analytics all pages report: Search for "?" to see how each of the parameters you found is used by users. Be sure to check that URL query parameters have not been excluded in the view settings.
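For the log file step, a short script can turn raw access logs into a count of which parameter keys Googlebot actually requests. This is a minimal sketch that assumes your server writes the common/combined log format, with the request line in quotes. The user-agent check is a naive string match that does not verify the crawler. The function names are hypothetical.

```python
import re
from collections import Counter
from urllib.parse import parse_qsl, urlsplit

# Assumes combined/common log format, where the request line is quoted, e.g.
# "GET /widgets?sort=newest HTTP/1.1".
REQUEST_LINE = re.compile(r'"(?:GET|HEAD) (\S+) HTTP/[\d.]+"')


def googlebot_paths(log_lines):
    """Yield requested paths from lines whose user agent mentions Googlebot.

    This is a naive string check; it does not verify the crawler via reverse DNS.
    """
    for line in log_lines:
        if "Googlebot" not in line:
            continue
        match = REQUEST_LINE.search(line)
        if match:
            yield match.group(1)


def parameter_inventory(paths_or_urls):
    """Count how often each query-string key appears."""
    counts = Counter()
    for item in paths_or_urls:
        for key, _value in parse_qsl(urlsplit(item).query, keep_blank_values=True):
            counts[key] += 1
    return counts


log = [
    '66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /widgets?sort=newest&page=2 HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Oct/2024:13:56:01 +0000] "GET /widgets?utm_medium=social HTTP/1.1" 200 512 "-" "Mozilla/5.0"',
]
print(parameter_inventory(googlebot_paths(log)))
```

You can also pass `parameter_inventory` a list of URLs exported from your crawler to get the same count for the crawl step.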

Armed with this data, you can now decide how best to handle each of your website's parameters.

SEO Solutions To Tame URL Parameters

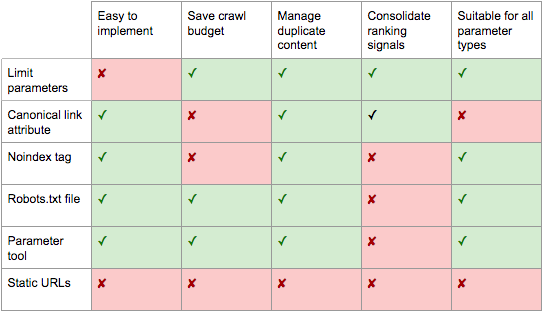

You have five tools in your SEO arsenal to deal with URL parameters on a strategic level.

Limit Parameter-Based URLs

A simple review of how and why parameters are generated can provide an SEO quick win.

You will often find ways to reduce the number of parameter URLs and thus minimize the negative SEO impact. There are four common issues to begin your review.

1. Eliminate Unnecessary Parameters

Image created by author

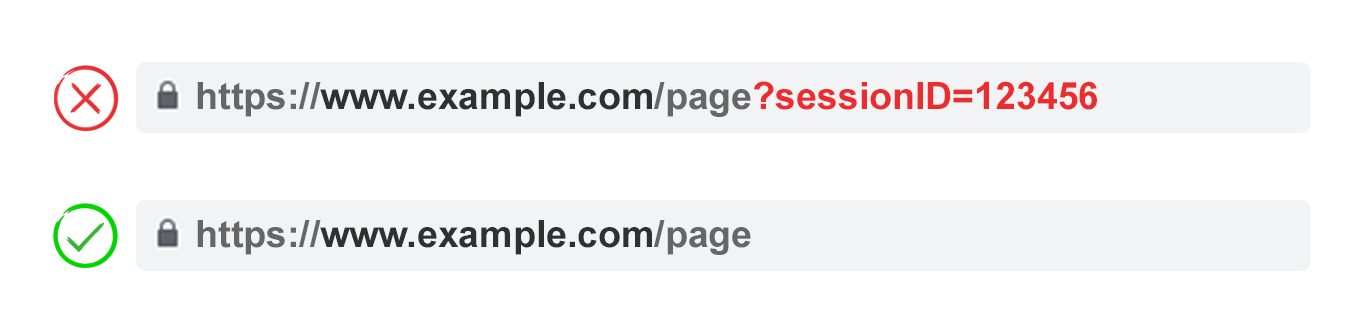

Ask your developer for a list of every parameter on the website and its function. Chances are, you will discover parameters that no longer perform a valuable function.

For example, users can be better identified by cookies than sessionIDs. Yet the sessionID parameter may still exist on your website because it was used historically.

Or you may discover that a filter in your faceted navigation is not used by your users.

Any parameters caused by technical debt should be eliminated immediately.
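Once a parameter is retired, old links to it still exist, so it helps to compute where each legacy URL should 301 to. This is a minimal sketch assuming a hypothetical `OBSOLETE_KEYS` set drawn from your audit. It only calculates the target; issuing the redirect is up to your server or framework.

```python
from typing import Optional
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical: keys your audit found to be technical debt.
OBSOLETE_KEYS = {"sessionid"}


def redirect_target(url: str) -> Optional[str]:
    """Return the URL to 301 to once obsolete keys are removed, or None if none were present."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    kept = [(key, value) for key, value in pairs if key.lower() not in OBSOLETE_KEYS]
    if len(kept) == len(pairs):
        return None  # nothing obsolete here; serve the page normally
    return urlunsplit(parts._replace(query=urlencode(kept)))


print(redirect_target("https://www.example.com/widgets?sessionID=32764"))
# https://www.example.com/widgets
```

Comparing pair counts, rather than the rebuilt string against the original, avoids spurious redirects caused by harmless differences in percent-encoding.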

2. Prevent Empty Values

Image created by author

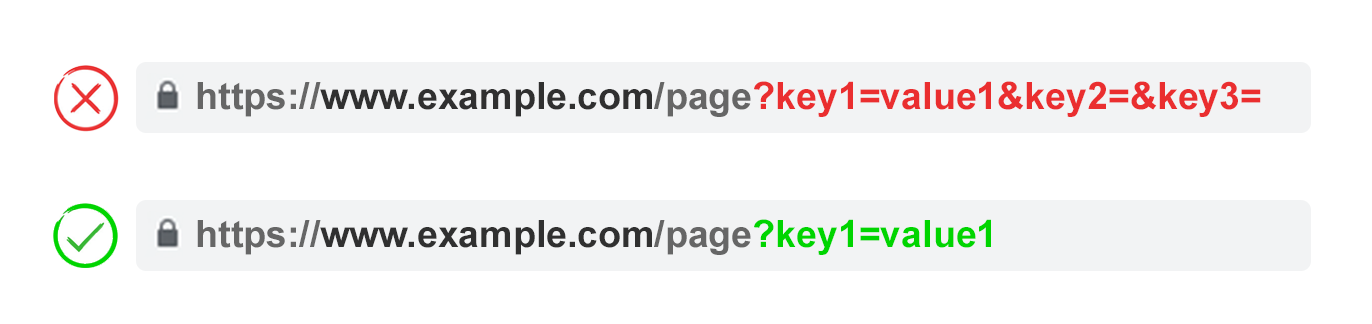

URL parameters should be added to a URL only when they have a function. Don't permit parameter keys to be added if the value is blank.

In the above example, key2 and key3 add no value, both literally and figuratively.
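On the code side, the simplest fix is to filter out empty values before the query string is ever built. Here's a minimal sketch; `build_url` is a hypothetical helper, and it assumes parameter values arrive as strings or `None`.

```python
from urllib.parse import urlencode


def build_url(base: str, params: dict) -> str:
    """Append only the parameters that actually carry a value."""
    kept = {key: value for key, value in params.items() if value not in ("", None)}
    query = urlencode(kept)
    # No '?' at all when every value was empty.
    return f"{base}?{query}" if query else base


print(build_url("https://www.example.com/widgets", {"key1": "value1", "key2": "", "key3": None}))
# https://www.example.com/widgets?key1=value1
```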

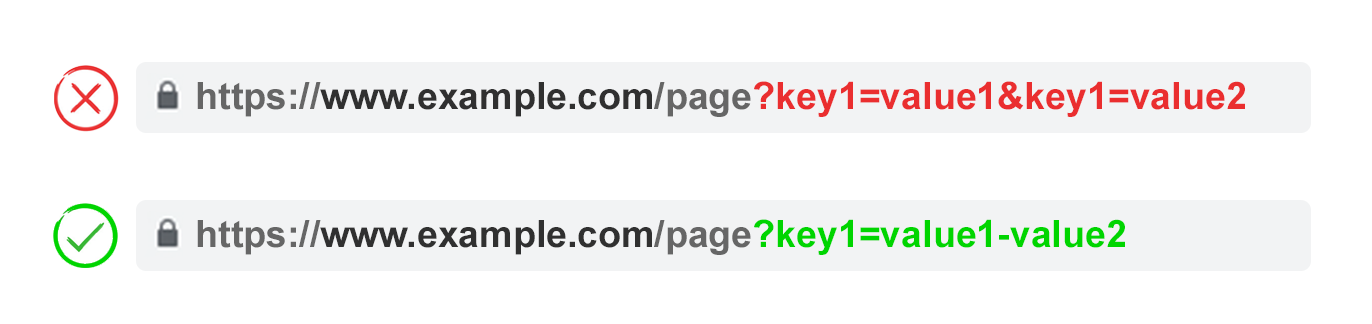

3. Use Keys Only Once

Image created by author

Avoid applying multiple parameters with the same parameter name and a different value.

For multi-select options, it is better to combine the values after a single key.
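As an illustration, repeated keys can be folded into one key with a joined value. This sketch assumes a comma separator, which is a hypothetical choice. Your server must split on the same character, and it breaks down if values can themselves contain commas.

```python
from urllib.parse import parse_qsl, urlencode


def merge_repeated_keys(query: str, separator: str = ",") -> str:
    """Collapse key=a&key=b into key=a,b, keeping first-seen key order."""
    merged = {}
    for key, value in parse_qsl(query, keep_blank_values=True):
        merged.setdefault(key, []).append(value)
    joined = {key: separator.join(values) for key, values in merged.items()}
    # safe=separator stops urlencode from percent-encoding the comma as %2C.
    return urlencode(joined, safe=separator)


print(merge_repeated_keys("color=purple&color=pink&size=small"))
# color=purple,pink&size=small
```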

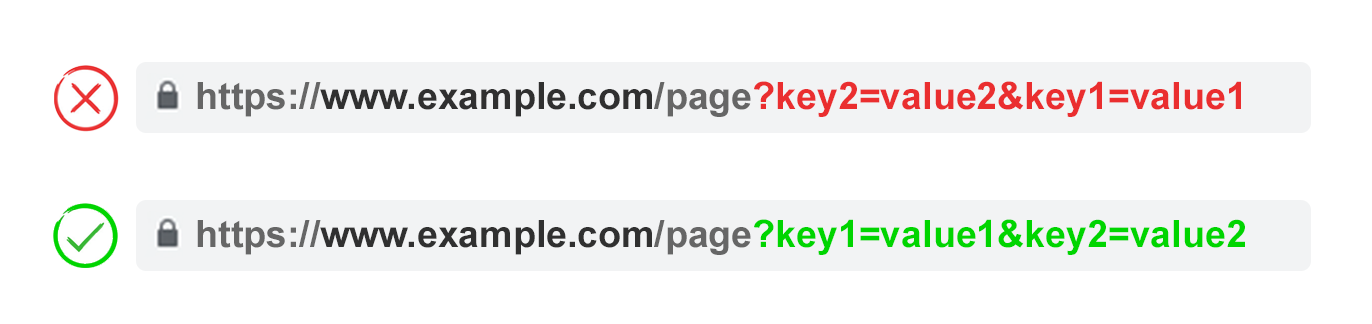

4. Order URL Parameters

Image created by author

If the same URL parameters are rearranged, search engines interpret the pages as equal.

As such, parameter order doesn't matter from a duplicate content perspective. But each of those combinations burns crawl budget and splits ranking signals.

Avoid these issues by asking your developer to write a script that always places parameters in a consistent order, regardless of how the user selected them.

In my opinion, you should start with any translating parameters, followed by identifying, then pagination, then layering on filtering and reordering or search parameters, and finally tracking.
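Such a script could look roughly like the sketch below. It follows the order suggested above. The `KEY_TYPE` mapping is hypothetical: you would fill it from your own parameter audit. Placing unmapped keys just before tracking is also an assumption.

```python
from urllib.parse import parse_qsl, urlencode

# Hypothetical mapping from this site's keys to parameter types.
KEY_TYPE = {
    "lang": "translating",
    "category": "identifying",
    "product": "identifying",
    "page": "paginating",
    "type": "filtering",
    "color": "filtering",
    "sort": "reordering",
    "q": "searching",
    "sessionid": "tracking",
    "utm_medium": "tracking",
}
# Translating, identifying, pagination, then filtering, then reordering or
# search, and finally tracking.
TYPE_RANK = {
    "translating": 0,
    "identifying": 1,
    "paginating": 2,
    "filtering": 3,
    "reordering": 4,
    "searching": 4,
    "tracking": 6,
}
UNKNOWN_RANK = 5  # assumption: unmapped keys sit just before tracking


def ordered_query(query: str) -> str:
    """Rewrite a query string so its parameters always appear in one order."""
    def sort_key(pair):
        key = pair[0]
        rank = TYPE_RANK.get(KEY_TYPE.get(key.lower(), ""), UNKNOWN_RANK)
        return (rank, key)  # ties within a type are broken alphabetically

    pairs = parse_qsl(query, keep_blank_values=True)
    return urlencode(sorted(pairs, key=sort_key))


print(ordered_query("utm_medium=social&sort=newest&color=purple&lang=fr&page=2"))
# lang=fr&page=2&color=purple&sort=newest&utm_medium=social
```

However the user clicks through the filters, every combination now resolves to a single URL.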

Pros:

- Ensures more efficient crawling.

- Reduces duplicate content issues.

- Consolidates ranking signals to fewer pages.

- Suitable for all parameter types.

Cons:

- Moderate technical implementation time.

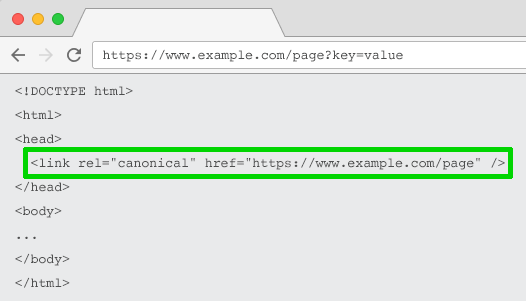

Rel="Canonical" Link Attribute

Image created by author

The rel="canonical" link attribute calls out that a page has identical or similar content to another. This encourages search engines to consolidate the ranking signals to the URL specified as canonical.

You can rel=canonical your parameter-based URLs to your SEO-friendly URL for tracking, identifying, or reordering parameters.

But this tactic is not suitable when the parameter page content is not close enough to the canonical, such as with pagination, searching, translating, or some filtering parameters.
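For tracking and reordering parameters, the canonical target is usually the same URL with those keys removed. Here's a minimal sketch of generating the tag, assuming a hypothetical `CANONICALISE_KEYS` set. Identifying parameters usually need a lookup to their static URL instead, which isn't shown here.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical: tracking and reordering keys whose pages should canonicalise
# to the same URL without them.
CANONICALISE_KEYS = {"utm_medium", "utm_source", "sessionid", "sort", "order"}


def canonical_link(url: str) -> str:
    """Build a rel="canonical" link element pointing at the parameter-free version."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in CANONICALISE_KEYS
    ]
    target = urlunsplit(parts._replace(query=urlencode(kept), fragment=""))
    # escape() turns '&' into '&amp;', the safe way to put it in an HTML attribute.
    return f'<link rel="canonical" href="{escape(target)}">'


print(canonical_link("https://www.example.com/widgets?sort=newest&utm_medium=social"))
# <link rel="canonical" href="https://www.example.com/widgets">
```

Content-changing keys, such as a filter, are kept, so a filtered page is not canonicalised to an unrelated one.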

Pros:

- Relatively easy technical implementation.

- Very likely to safeguard against duplicate content issues.

- Consolidates ranking signals to the canonical URL.

Cons:

- Wastes crawling on parameter pages.

- Not suitable for all parameter types.

- Interpreted by search engines as a strong hint, not a directive.

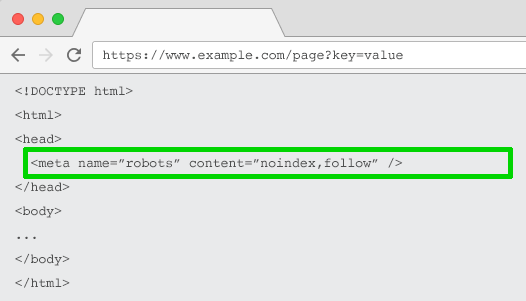

Meta Robots Noindex Tag

Image created by author

Set a noindex directive for any parameter-based page that doesn't add SEO value. This tag will prevent search engines from indexing the page.

URLs with a "noindex" tag are also likely to be crawled less frequently and, if the tag is present for a long time, it will eventually lead Google to nofollow the page's links.
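In a template, this usually comes down to a simple rule: if the URL carries a low-value key, emit the tag. This is a minimal sketch, with `NOINDEX_KEYS` as a hypothetical list you would define per site.

```python
from typing import Optional
from urllib.parse import parse_qsl, urlsplit

# Hypothetical: keys whose pages add no SEO value on this site.
NOINDEX_KEYS = {"sort", "order", "q", "search", "price-range"}


def robots_meta(url: str) -> Optional[str]:
    """Return a noindex meta tag for low-value parameter pages, else None."""
    keys = {key.lower() for key, _ in parse_qsl(urlsplit(url).query, keep_blank_values=True)}
    if keys & NOINDEX_KEYS:
        return '<meta name="robots" content="noindex">'
    return None  # no low-value keys: leave the page indexable


print(robots_meta("https://www.example.com/widgets?sort=newest"))
# <meta name="robots" content="noindex">
```

If editing templates is awkward, the same instruction can be sent as an `X-Robots-Tag: noindex` HTTP response header.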

Pros:

- Relatively easy technical implementation.

- Very likely to safeguard against duplicate content issues.

- Suitable for all parameter types you do not wish to be indexed.

- Removes existing parameter-based URLs from the index.

Cons:

- Won't prevent search engines from crawling URLs, but will encourage them to do so less frequently.

- Doesn't consolidate ranking signals.

- Interpreted by search engines as a strong hint, not a directive.

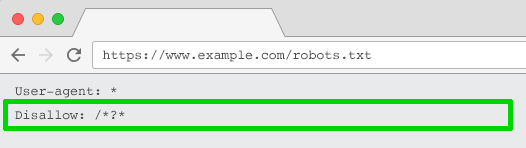

Robots.txt Disallow

Image created by author

The robots.txt file is what search engines look at first before crawling your site. If they see something is disallowed, they won't even go there.

You can use this file to block crawler access to every parameter-based URL (with Disallow: /*?*) or only to specific query strings you don't want crawled.
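Before deploying a wildcard rule, it's worth checking which URLs it really catches. Python's built-in `urllib.robotparser` doesn't handle `*` wildcards, so this simplified sketch translates a single Disallow pattern into a regex. It treats `*` as "any characters" and a trailing `$` as an end anchor, the way Google documents them. It deliberately ignores Allow rules and longest-match precedence. The function names are hypothetical.

```python
import re


def robots_pattern_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate one robots.txt path pattern into a regex anchored at the path start."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape literal text (including '?'), then turn each '*' into '.*'.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))


def is_disallowed(path_and_query: str, disallow_pattern: str) -> bool:
    """True if a single Disallow pattern matches the path plus query string."""
    return robots_pattern_to_regex(disallow_pattern).match(path_and_query) is not None


for path in ["/widgets", "/widgets?sort=newest", "/widgets?color=purple&sessionid=1"]:
    print(path, is_disallowed(path, "/*?*"), is_disallowed(path, "/*?sessionid="))
# /widgets False False
# /widgets?sort=newest True False
# /widgets?color=purple&sessionid=1 True False
```

The last line shows a common trap: a rule for one specific key only matches when that key comes straight after the '?'. Sites that target specific keys often add a companion rule such as `/*&sessionid=` to catch the key in later positions.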

Pros:

- Simple technical implementation.

- Allows more efficient crawling.

- Avoids duplicate content issues.

- Suitable for all parameter types you do not wish to be crawled.

Cons:

- Doesn't consolidate ranking signals.

- Doesn't remove existing URLs from the index.

Move From Dynamic To Static URLs

Many people think the optimal way to handle URL parameters is to simply avoid them in the first place.

After all, subfolders outperform parameters in helping Google understand site structure, and static, keyword-based URLs have always been a cornerstone of on-page SEO.

To achieve this, you can use server-side URL rewrites to convert parameters into subfolder URLs.

For example, the URL:

www.example.com/view-product?id=482794

Would become:

www.example.com/widgets/purple
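Behind such a rewrite there is usually a lookup from the old ID to the new keyword path, plus a 301 from the old URL. This is a minimal sketch of the lookup half. The `STATIC_PATHS` table and the `static_redirect` name are hypothetical. Your server still has to serve the content at the new path and send the redirect.

```python
from typing import Optional
from urllib.parse import parse_qs, urlsplit

# Hypothetical lookup from legacy product IDs to keyword-based static paths.
STATIC_PATHS = {"482794": "/widgets/purple"}


def static_redirect(url: str) -> Optional[str]:
    """Return the static URL a legacy /view-product?id= URL should 301 to, if known."""
    parts = urlsplit(url)
    if parts.path != "/view-product":
        return None
    product_id = parse_qs(parts.query).get("id", [None])[0]
    path = STATIC_PATHS.get(product_id)
    if path is None:
        return None  # unknown ID: leave the request alone rather than guess
    return f"{parts.scheme}://{parts.netloc}{path}"


print(static_redirect("https://www.example.com/view-product?id=482794"))
# https://www.example.com/widgets/purple
```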

This approach works well for descriptive keyword-based parameters, such as those that identify categories, products, or filters for search engine-relevant attributes. It is also effective for translated content.

But it becomes problematic for non-keyword-relevant elements of faceted navigation, such as an exact price. Having such a filter as a static, indexable URL offers no SEO value.

It's also an issue for searching parameters, as every user-generated query would create a static page that vies for ranking against the canonical or, worse, presents crawlers with low-quality content pages every time a user has searched for an item you don't offer.

It's somewhat odd when applied to pagination (although not uncommon due to WordPress), which would give a URL such as

www.example.com/widgets/purple/page2

Very odd for reordering, which would give a URL such as

www.example.com/widgets/purple/lowest-price

And it is often not a viable option for tracking. Google Analytics will not recognize a static version of the UTM parameter.

More to the point: Replacing dynamic parameters with static URLs for things like pagination, on-site search box results, or sorting does not address duplicate content, crawl budget, or internal link equity dilution.

Having all the combinations of filters from your faceted navigation as indexable URLs often results in thin content issues, especially if you offer multi-select filters.

Many SEO pros argue it's possible to provide the same user experience without impacting the URL, for example by using POST rather than GET requests to modify the page content, thus preserving the user experience and avoiding SEO problems.

But stripping out parameters in this manner would remove the possibility for your audience to bookmark or share a link to that specific page. It is also clearly not feasible for tracking parameters and not optimal for pagination.

The crux of the matter is that for many websites, completely avoiding parameters is simply not possible if you want to provide the ideal user experience. Nor would it be best practice SEO.

So we are left with this. For parameters that you don't want to be indexed in search results (paginating, reordering, tracking, etc.), implement them as query strings. For parameters that you do want to be indexed, use static URL paths.

Pros:

- Shifts crawler focus from parameter-based to static URLs, which have a higher likelihood to rank.

Cons:

- Significant investment of development time for URL rewrites and 301 redirects.

- Doesn't prevent duplicate content issues.

- Doesn't consolidate ranking signals.

- Not suitable for all parameter types.

- May lead to thin content issues.

- Doesn't always provide a linkable or bookmarkable URL.

Best Practices For URL Parameter Handling For SEO

So which of these five SEO tactics should you implement?

The answer can't be all of them.

Not only would that create unnecessary complexity, but often, the SEO solutions actively conflict with one another.

For example, if you implement robots.txt disallow, Google would not be able to see any meta noindex tags. You also shouldn't combine a meta noindex tag with a rel=canonical link attribute.

Google's John Mueller, Gary Illyes, and Lizzi Sassman couldn't even decide on an approach. In a Search Off The Record episode, they discussed the challenges that parameters present for crawling.

They even suggest bringing back a parameter handling tool in Google Search Console. Google, if you are reading this, please do bring it back!

What becomes clear is there isn't one perfect solution. There are occasions when crawling efficiency is more important than consolidating authority signals.

Ultimately, what's right for your website will depend on your priorities.

Image created by author

Personally, I take the following plan of attack for SEO-friendly parameter handling:

- Research user intents to understand which parameters should be search engine-friendly, static URLs.

- Implement effective pagination handling using a ?page= parameter.

- For all remaining parameter-based URLs, block crawling with a robots.txt disallow and add a noindex tag as backup.

- Double-check that no parameter-based URLs are being submitted in the XML sitemap.
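The sitemap check is easy to automate. This minimal sketch parses a standard `urlset` sitemap with Python's built-in ElementTree and lists any `<loc>` containing a '?'. It assumes a single sitemap file rather than a sitemap index, and the function name is hypothetical.

```python
import xml.etree.ElementTree as ET

# The standard sitemap namespace from sitemaps.org.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def parameter_urls_in_sitemap(xml_text: str) -> list:
    """Return every <loc> in a urlset sitemap that contains a query string."""
    root = ET.fromstring(xml_text)
    locs = [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]
    return [url for url in locs if "?" in url]


SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/widgets</loc></url>
  <url><loc>https://www.example.com/widgets?sort=newest</loc></url>
</urlset>"""

print(parameter_urls_in_sitemap(SAMPLE))
# ['https://www.example.com/widgets?sort=newest']
```

An empty list means the sitemap is clean.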

No matter what parameter handling strategy you choose to implement, be sure to document the impact of your efforts on KPIs.


Featured Image: BestForBest/Shutterstock